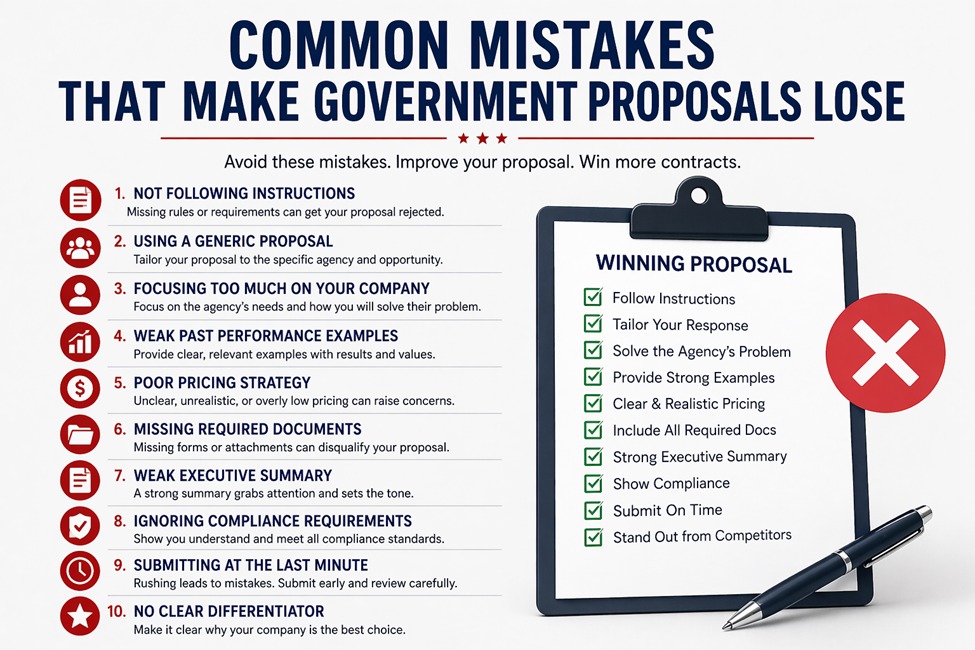

Federal evaluators don’t “skim and vibe.” They score. And the fastest way to lose is to hand them reasons to deduct points or toss your bid. Here are five proposal-killers we see again and again, and the concrete fixes that keep you in the game.

1) Treating Section L Like a Suggestion

The mistake:

Great story, wrong format. Teams ignore page limits, blow past font rules, bury required tabs, or submit the wrong file types. Some miss a required volume entirely or forget a signed form. That’s how compliant competitors win by default.

How to avoid it:

- Build a compliance matrix on day one. Map every “shall/must” to a page, heading, and owner. Update it with every amendment.

- Mirror the RFP. Use the same volume order, section numbers, and table names so evaluators can trace compliance without hunting.

- Run a “format scrub.” Before Red Team, have a ruthless editor check fonts, margins, file names, bookmarks, and tab structure.

- Lock a production checklist. Include signatures, reps & certs, SF forms, J.P. Morgan text boxes, whatever’s required. No checklist, no submission.
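If your team has the RFP text in plain form, the day-one compliance matrix can start as a short script. The Python sketch below is a hypothetical helper, not a standard tool: it pulls every sentence containing “shall” or “must” into a numbered row, leaves the proposal location and owner for the team to fill in, and lists rows still missing either one. The `L-001` ID scheme and the naive sentence splitting are assumptions; real solicitations with tables, attachments, and abbreviations like “Vol.” need more careful parsing and a human pass.

```python
import re
from dataclasses import dataclass


@dataclass
class MatrixRow:
    req_id: str             # hypothetical ID scheme, e.g. "L-001"
    requirement: str        # sentence containing "shall" or "must"
    proposal_ref: str = ""  # volume/page/heading that answers it (filled in by the team)
    owner: str = ""         # person accountable for the response


# "shall" or "must" as whole words, any capitalization.
BINDING = re.compile(r"\b(shall|must)\b", re.IGNORECASE)


def build_matrix(rfp_text: str, prefix: str = "L") -> list[MatrixRow]:
    """Keep each sentence with binding language as a numbered matrix row."""
    # Naive split: after a period or semicolon followed by whitespace, or at line breaks.
    sentences = re.split(r"(?<=[.;])\s+|\n+", rfp_text)
    rows: list[MatrixRow] = []
    for sentence in sentences:
        sentence = sentence.strip()
        if sentence and BINDING.search(sentence):
            rows.append(MatrixRow(f"{prefix}-{len(rows) + 1:03d}", sentence))
    return rows


def unassigned(rows: list[MatrixRow]) -> list[str]:
    """IDs of requirements that still lack a proposal location or an owner."""
    return [r.req_id for r in rows if not r.proposal_ref or not r.owner]


if __name__ == "__main__":
    sample = (
        "The technical volume shall not exceed 30 pages. "
        "Offerors must use 12-point font. "
        "The Government may request oral presentations."
    )
    matrix = build_matrix(sample)
    matrix[0].proposal_ref, matrix[0].owner = "Vol. I, 1.1", "Volume lead"
    for row in matrix:
        print(row.req_id, row.requirement)
    print("Unassigned:", unassigned(matrix))
```

With the sample text, only the first two sentences become rows; the “may” sentence is not binding language. Re-running the script against each amendment and comparing the output is one way to keep the matrix current.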

2) Repeating the SOW Instead of Explaining the “How”

The mistake:

Parroting requirements with generic promises: “We will implement best practices and ensure world-class quality.” That’s filler, not proof. Evaluators want to know how you’ll do the work, with steps, resources, and controls that cut risk.

How to avoid it:

- Write to the verbs. For every requirement, answer: Who does it? With what tools? On what cadence? What inputs/outputs?

- Tie to performance measures. Show how your approach meets each PWS metric: throughput, accuracy, uptime, and response time.

- Add mini-playbooks. One-page workflows for onboarding, change control, incident response, and transition cutover.

- Quantify benefits. “Automated provisioning reduces onboarding time from 10 days to 48 hours” beats “efficient onboarding.”
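“Write to the verbs” also works as a completeness check before Pink Team. A minimal Python sketch, assuming the team records its answer to each requirement in a simple structure: the hypothetical `HowAnswer` fields mirror the questions above (who, tools, cadence, inputs, outputs), and `missing()` lists whichever ones are still blank. The class and field names are illustrative, not part of any standard.

```python
from dataclasses import dataclass, fields


@dataclass
class HowAnswer:
    requirement: str
    who: str = ""      # role or team that performs the work
    tools: str = ""    # systems or tools used
    cadence: str = ""  # how often: daily, per release, per ticket
    inputs: str = ""   # what triggers or feeds the work
    outputs: str = ""  # what the work produces

    def missing(self) -> list[str]:
        """Names of the 'how' questions this answer still leaves blank."""
        return [
            f.name
            for f in fields(self)
            if f.name != "requirement" and not getattr(self, f.name).strip()
        ]


if __name__ == "__main__":
    answer = HowAnswer(
        "Provision user accounts",
        who="Service desk tier 1",
        tools="Ticketing system with automated provisioning",
    )
    print(answer.missing())
```

Any requirement with a non-empty `missing()` list is a spot where the draft is probably still parroting the SOW.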

3) Weak, Irrelevant, or Unverified Past Performance

The mistake:

Submitting big, shiny contracts that don’t match the scope or environment, or citing results you can’t back up. Evaluators value relevance and recency, and they check.

How to avoid it:

- Curate, don’t collect. Pick projects that match the agency, tech stack, security posture, data volume, and complexity, even if they’re smaller.

- Map relevance explicitly. A short table that aligns each past performance to the RFP tasks and risks helps evaluators score faster.

- Get permissions early. Secure POCs and letters of intent for key personnel and subs so nothing collapses in due diligence.
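The relevance table can be generated from a simple mapping, so gaps surface before an evaluator finds them. This Python sketch assumes you have already tagged each candidate project with the RFP tasks it demonstrates; the function names, project names, and task names are placeholders. It builds the task-by-project grid and lists any RFP task that no reference covers.

```python
def relevance_table(
    rfp_tasks: list[str], projects: dict[str, set[str]]
) -> list[list[str]]:
    """One row per RFP task, with an 'X' under each project that demonstrates it."""
    rows = [["RFP task", *projects]]
    for task in rfp_tasks:
        rows.append([task, *("X" if task in covered else "" for covered in projects.values())])
    return rows


def uncovered(rfp_tasks: list[str], projects: dict[str, set[str]]) -> list[str]:
    """RFP tasks that no past performance reference demonstrates."""
    demonstrated = set().union(*projects.values())
    return [task for task in rfp_tasks if task not in demonstrated]


if __name__ == "__main__":
    tasks = ["Help desk", "Cloud migration", "Zero trust architecture"]
    refs = {
        "Project A": {"Help desk", "Cloud migration"},
        "Project B": {"Help desk"},
    }
    for row in relevance_table(tasks, refs):
        print(" | ".join(cell or "-" for cell in row))
    print("Not covered:", uncovered(tasks, refs))
```

An uncovered task is a cue to find a better-matched reference or to address the gap directly in the narrative.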

4) Price That Doesn’t Match the Story

The mistake:

A technical approach that needs 8 FTEs, priced at 5. Or travel assumptions that don’t match the schedule. Or rates so low they trigger cost realism concerns. Misaligned pricing screams risk.

How to avoid it:

- Build a Basis of Estimate (BOE). Show workload drivers, assumptions, labor categories, hours by phase, and tool costs.

- Traceability is king. Every task in the approach should show up in the BOE and the CLINs. No orphan tasks, no orphan dollars.

- Scenario-test. If the RFP has surge or optional tasks, price the underlying capacity and explain how you’ll scale.

- Check rates for realism. Use current market data and escalation aligned to the Period of Performance. If you discount, explain why (IP, automation, incumbent efficiencies).
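“No orphan tasks, no orphan dollars” is essentially a set comparison, and the 8-FTEs-priced-at-5 mismatch is simple arithmetic. A rough Python sketch, assuming each artifact (technical approach, BOE, CLIN structure) has been reduced to a set of task names: the hypothetical `orphans` reports tasks that appear in one place but not another, and `fte_gap` compares the staffing the approach describes with the hours actually priced. The 1,880 productive hours per FTE default is a placeholder assumption; use the figure your BOE and pricing team actually apply.

```python
def orphans(
    approach: set[str], boe: set[str], clins: set[str]
) -> dict[str, set[str]]:
    """Tasks that appear in one artifact but are missing from another."""
    return {
        "in approach, not in BOE": approach - boe,  # orphan tasks
        "in BOE, not in approach": boe - approach,  # orphan dollars
        "in BOE, not on any CLIN": boe - clins,
        "on a CLIN, not in BOE": clins - boe,
    }


def fte_gap(
    approach_ftes: float, priced_hours: float, hours_per_fte: float = 1880.0
) -> float:
    """Positive result: the approach needs more staff than the price pays for."""
    return approach_ftes - priced_hours / hours_per_fte


if __name__ == "__main__":
    approach = {"Help desk", "Transition", "Reporting"}
    boe = {"Help desk", "Transition", "Surge support"}
    clins = {"Help desk", "Surge support"}
    for check, tasks in orphans(approach, boe, clins).items():
        if tasks:
            print(f"{check}: {sorted(tasks)}")
    # 8 FTEs described in the approach, but only 5 FTEs' worth of hours priced.
    print("FTE gap:", fte_gap(8, 5 * 1880))
```

Every non-empty set and any positive FTE gap is something an evaluator doing a cost realism review could also find, so it is worth fixing or explaining before submission.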

5) Ignoring Risk (or Hiding It)

The mistake:

Pretending everything will run perfectly. Evaluators expect issues: clearances, data migration, legacy integrations, staffing, and cybersecurity. If you don’t name them, you look naïve.

How to avoid it:

- Publish a risk register in the proposal. For each major risk: probability, impact, mitigation, owner, and early warning indicators.

- Turn mitigations into strengths. Bench capacity, pre-cleared candidates, dual-run cutover, automated testing, and DR drills: tie each one to less downtime or a lighter compliance burden.

- Add SLAs with teeth. Offer credits or remedial actions that matter. It shows confidence and lowers perceived risk.

- Show governance that works. Name the roles, cadence, artifacts (dashboards, QBRs, change logs), and escalation paths.
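A risk register is a small data structure, and sorting it by exposure shows which risks deserve the most space in the narrative. This Python sketch assumes 1-to-5 scales for probability and impact and scores exposure as probability times impact; that is one common convention, not a requirement of any RFP, so use whatever scale the solicitation or your capture process specifies. The `Risk` class and the sample risks are illustrative.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    name: str
    probability: int    # assumed scale: 1 (rare) to 5 (near certain)
    impact: int         # assumed scale: 1 (minor) to 5 (severe)
    mitigation: str
    owner: str
    early_warning: str  # indicator that the risk is starting to materialize

    def __post_init__(self) -> None:
        for label, value in (("probability", self.probability), ("impact", self.impact)):
            if not 1 <= value <= 5:
                raise ValueError(f"{label} must be 1-5, got {value}")

    @property
    def exposure(self) -> int:
        # Probability times impact: a common scoring convention, not a mandated formula.
        return self.probability * self.impact


def ranked(register: list[Risk]) -> list[Risk]:
    """Highest-exposure risks first."""
    return sorted(register, key=lambda r: r.exposure, reverse=True)


if __name__ == "__main__":
    register = [
        Risk("Legacy interface changes", 2, 3, "Interface control documents and regression suite",
             "Integration lead", "Unannounced schema change in test"),
        Risk("Clearance processing delays", 4, 4, "Bench of pre-cleared candidates",
             "Recruiting lead", "Fewer than two cleared candidates per open seat"),
        Risk("Data migration errors", 3, 5, "Dual-run cutover with reconciliation reports",
             "Migration lead", "Reconciliation mismatches above agreed threshold"),
    ]
    for risk in ranked(register):
        print(risk.exposure, risk.name, "->", risk.owner)
```

Keeping owner and early warning indicator as required fields means no risk can enter the register without someone accountable and a signal to watch.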

Quick Wins That Raise Your Score

- Write to Section M. Start each subfactor with a one-paragraph “compliance and strengths” summary. Make the board’s job easy.

- Use evaluator-friendly visuals. Responsibility matrices, RACI for interfaces, Gantt for transition, and architecture diagrams with labeled controls, all clean and legible.

- Call out strengths explicitly. If allowed, use bold callouts like “Strength: Automated compliance dashboard cuts audit prep by 40%.”

- Tailor resumes. Mirror labor category quals, list relevant tools and clearances, and keep bullets focused on measurable results.

- Run color teams like you mean it. Pink for clarity, Red for scoring gaps, Gold for executive polish. Give each review a distinct mission and a cut list.

Common Traps to Avoid

- Copy-paste culture. Recycling boilerplate that mentions the wrong agency or policy signals sloppiness.

- Late teaming. Sub-Ks, NDAs, and OCI mitigations hammered out at the end create gaps and missed dependencies.

- Amendment whiplash. Page limits, forms, and due dates change. Freeze your matrix after each amendment and re-verify every volume.

- Buzzword soup. “Cutting-edge,” “synergy,” “paradigm”: none of these earns a strength without metrics and mechanisms.

Bottom Line

Winning proposals are compliant, traceable, and low-risk. Follow Section L like a checklist, write to Section M like a scorecard, prove relevance with hard numbers, and make your price tell the same story as your approach. Do those four things consistently, and you’ll stop losing on avoidable mistakes and start winning on purpose.